Cayman Professor heads US National Research Council’s report on unmanned aircraft, which pose serious safety concerns

Overcoming barriers to successful use of autonomous unmanned aircraft

From Science Daily

While civil aviation is on the threshold of potentially revolutionary changes with the emergence of increasingly autonomous unmanned aircraft, these new systems pose serious questions about how they will be safely and efficiently integrated into the existing civil aviation structure, says a new report from the National Research Council. The report identifies key barriers and provides a research agenda to aid the orderly incorporation of unmanned and autonomous aircraft into public airspace.

“There is little doubt that over the long run the potential benefits of advanced unmanned aircraft and other increasingly autonomous systems to civil aviation will indeed be great, but there should be equally little doubt that getting there while maintaining the safety and efficiency of the nation’s civil aviation system will be no easy matter,” said John-Paul Clarke, co-chair of the committee that wrote the report and associate professor of aerospace engineering at the Georgia Institute of Technology.

The report uses the term “increasingly autonomous” systems to describe a spectrum of technologies, from unmanned aircraft that are piloted remotely — which describes most such aircraft currently in use — to advanced autonomous systems for unmanned aircraft that would adapt to changing conditions and require little or no human intervention. Increasingly autonomous systems could also be used in crewed aircraft and air traffic management systems to lessen the need for human monitoring and control.

Development of such systems is accelerating, prompted by the promise of a range of applications, such as unmanned aircraft that could be used to dust crops, monitor traffic, or execute dangerous missions currently undertaken by crewed planes, such as fighting forest fires. The FAA currently prohibits commercial use of unmanned aircraft without a waiver or special authorization.

NASA’s Aeronautics Research Mission Directorate requested that the Research Council convene a committee to develop a national research agenda for autonomy in civil aviation.

For more on this story go to: http://www.sciencedaily.com/releases/2014/06/140605113709.htm

The following are excerpts only from the report that runs to 82 pages. The whole report can be downloaded from The National Academies Press at http://www.nap.edu/catalog.php?record_id=18815

Autonomy Research for Civil Aviation: Toward a new era of flight

EDITOR: The USA’s National Research Council (NRC) has just produced (2014) a report on Autonomy Research (AR). The NRC was organized by the National Academy of Sciences in 1916 to associate the broad community of science and technology with the Academy’s purposes of furthering knowledge and advising the federal government.

The Committee on Autonomy Research for Civil Aviation was headed by JOHN-PAUL B. CLARKE, Georgia Institute of Technology, and JOHN K. LAUBER, Consultant, as Co-chairs.

Preface

Technological advances in computer processing, sensors, networking, and other areas are facilitating the development and operation of increasingly autonomous (IA) systems and vehicles for a wide variety of applications on the ground, in space, at sea, and in the air. IA systems have the potential to improve safety and reliability, reduce costs, and enable new missions. However, deploying IA systems is not without risk. In particular, failure to implement IA systems in a careful and deliberate manner could potentially reduce safety and/or reliability and increase life-cycle costs. These factors are especially critical to civil aviation given the very high standards for safety and reliability and the risk to public safety that occurs whenever the performance of new civil aviation technologies or systems falls short of expectations.

Research and technology development plays a critical role in determining the effectiveness of IA systems and the pace at which they advance. In addition, a wide variety of organizations possess key expertise and are making advances in technologies directly related to the advancement of IA systems for civil aviation. Accordingly, NASA’s Aeronautics Research Mission Directorate requested that the NRC convene a committee to develop a national research agenda for autonomy in civil aviation. In response, the Aeronautics and Space Engineering Board of the Division on Engineering and Physical Sciences, with the assistance of the Board on Human–Systems Integration of the Division of Behavioral and Social Sciences and Education, assembled a committee to carry out the assigned statement of task. As specified in that statement, the committee developed a research agenda consisting of a prioritized set of research projects that, if completed by NASA and other interested parties, would enable concepts of operation for the National Airspace System, where systems and aircraft with various autonomous capabilities are able to operate in harmony; demonstrate IA capabilities for crewed and unmanned aircraft; predict the system-level effects of incorporating IA systems and aircraft in the National Airspace System; and define approaches for verification, validation, and certification of IA systems. The committee was also tasked with describing contributions that advances in autonomy could make to civil aviation and the technical and policy barriers that must be overcome to fully and effectively implement IA systems in civil aviation. The scope of this study does not include organizational recommendations.

BOX S.1

Civil Aviation

In this report, “civil aviation” is used to refer to all nonmilitary aircraft operations in U.S. civil airspace. This includes operations of civil aircraft as well as nonmilitary public use aircraft (that is, aircraft owned or operated by federal, state, and local government agencies other than the Department of Defense). In addition, many of the IA technologies that would be developed by the recommended research projects would generally be applicable to military crewed and/or unmanned aircraft for military operations and/or operations in the NAS.

BOX S.2

Increasingly Autonomous Systems

A fully autonomous aircraft would not require a pilot; it would be able to operate independently within civil airspace, interacting with air traffic controllers and other pilots just as if a human pilot were on board and in command. Similarly, a fully autonomous ATM system would not require human air traffic controllers. This study is not focused on those extremes (although it does sometimes address the needs or qualities of fully autonomous unmanned aircraft). Rather, the report primarily addresses what the committee calls “increasingly autonomous” (IA) systems, which lie along the spectrum of system capabilities that begins with the abilities of current automatic systems, such as autopiloted and remotely piloted (nonautonomous) unmanned aircraft, and progresses toward the highly sophisticated systems that would be needed to enable the extreme cases. Some IA systems, particularly adaptive/nondeterministic IA systems, lie farther along this spectrum than others, and in this report such systems are typically described as “advanced IA systems.”

BOX S.4

Unmanned Aircraft / Crewed Aircraft

An unmanned aircraft is “a device used or intended to be used for flight in the air that has no onboard pilot. This device excludes missiles, weapons, or exploding warheads, but includes all classes of airplanes, helicopters, airships, and powered-lift aircraft without an onboard pilot. Unmanned aircraft do not include traditional balloons (see 14 CFR Part 101), rockets, tethered aircraft and un-powered gliders.” A UAS is “an unmanned aircraft and its associated elements related to safe operations, which may include control stations (ground-, ship-, or air-based), control links, support equipment, payloads, flight termination systems, and launch/recovery equipment” (Integration of Civil Unmanned Aircraft Systems [UAS] in the National Airspace System [NAS] Roadmap, FAA, 2013). UAS include the data links and other communications systems used to connect the UAS control station, unmanned aircraft, and other elements of the NAS, such as ATM systems and human operators. Unless otherwise specified, UAS are assumed to have no humans on board either as flight crew or as passengers. “Crewed aircraft” is used to denote manned aircraft; unless specifically noted otherwise, manned aircraft are considered to have a pilot on board.

BOX S.5

Adaptive/Nondeterministic Systems

Adaptive systems have the ability to modify their behavior in response to their external environment. For aircraft systems, this could include commands from the pilot and inputs from aircraft systems, including sensors that report conditions outside the aircraft. Some of these inputs, such as airspeed, will be stochastic because of sensor noise as well as the complex relationship between atmospheric conditions and sensor readings not fully captured in calibration equations. Adaptive systems learn from their experience, either operational or simulated, so that the response of the system to a given set of inputs varies and, presumably, improves over time.

Systems that are nondeterministic may or may not be adaptive. They may be subject to the stochastic influences imposed by their complex internal operational architectures or their external environment, meaning that they will not always respond in precisely the same way even when presented with identical inputs or stimuli. The software that is at the heart of nondeterministic systems is expected to enable improved performance because of its ability to manage and interact with complex “world models” (large and potentially distributed data sets) and execute sophisticated algorithms to perceive, decide, and act in real time.

Systems that are adaptive and nondeterministic demonstrate the performance enhancements of both. Many advanced IA systems are expected to be adaptive and/or nondeterministic, and issues associated with the development and deployment of these adaptive/nondeterministic systems are discussed later in the report.
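EDITOR: The distinction drawn in Box S.5 can be illustrated with a small sketch. It is not taken from the report; the class name, the function names, the airspeed and bias values, and the availability of a trusted reference airspeed are all assumptions made purely for illustration. The sensor function is nondeterministic (identical inputs give different readings because of noise), while the estimator is adaptive (its bias estimate changes with experience).

```python
import random


class AdaptiveBiasEstimator:
    """Hypothetical adaptive element: learns a sensor's constant bias over time."""

    def __init__(self, learning_rate=0.05):
        self.learning_rate = learning_rate
        self.bias_estimate = 0.0

    def update(self, measured, reference):
        # Move the estimate a small step toward the observed offset.
        # 'reference' is an assumed trusted value (e.g. from simulation).
        error = (measured - reference) - self.bias_estimate
        self.bias_estimate += self.learning_rate * error

    def correct(self, measured):
        # Apply what has been learned so far to a raw reading.
        return measured - self.bias_estimate


def noisy_airspeed(true_airspeed, bias, noise_std, rng):
    """Stochastic sensor model: the same inputs yield different readings."""
    return true_airspeed + bias + rng.gauss(0.0, noise_std)


def run_demo(seed=0, steps=500):
    """Feed noisy readings to the estimator and return the learned bias."""
    rng = random.Random(seed)
    estimator = AdaptiveBiasEstimator()
    true_airspeed, bias = 120.0, 3.0  # illustrative values only
    for _ in range(steps):
        reading = noisy_airspeed(true_airspeed, bias, 1.0, rng)
        estimator.update(reading, true_airspeed)
    return estimator.bias_estimate
```

Because the estimator's response to a given reading depends on everything it has seen before, its behavior at step 500 differs from its behavior at step 1, which is the property that makes such systems harder to test exhaustively.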

BOX S.6

Operators

In this report, the term “operator” generally refers to pilots, air traffic controllers, airline flight operations staff, and other personnel who interact directly with IA civil aviation systems. “Pilot” is used when referring specifically to the operator of a crewed aircraft. With regard to unmanned aircraft, the FAA says that “in addition to the crewmembers identified in 14 CFR Part 1 [pilots, flight engineers, and flight navigators], a UAS flight crew includes pilots, sensor/payload operators, and visual observers, but may include other persons as appropriate or required to ensure safe operation of the aircraft” (Integration of Civil Unmanned Aircraft Systems [UAS] in the National Airspace System [NAS] Roadmap, FAA, 2013). Given that the makeup, certification requirements, and roles of UAS flight crews are likely to evolve as UAS acquire advanced IA capabilities, this report refers generally to UAS operators as flight crew rather than specifically as pilots.

Summary

The development and application of increasingly autonomous (IA) systems for civil aviation (see Boxes S.1 and S.2) are proceeding at an accelerating pace, driven by the expectation that such systems will return significant benefits in terms of safety, reliability, efficiency, affordability, and/or previously unattainable mission capabilities. IA systems, characterized by their ability to perform more complex mission-related tasks with substantially less human intervention for more extended periods of time, sometimes at remote distances, are being envisioned for aircraft and for air traffic management (ATM) and other ground-based elements of the National Airspace System (NAS) (see Box S.3). This vision and the associated technological developments have been spurred in large part by the convergence of the increased availability of low-cost, highly capable computing systems; digital communications; sensor technology; position, navigation, and timing systems (e.g., the Global Positioning System, GPS); and open-source hardware and software.

These technology enablers, coupled with expanded use in military operations and the emergence of an active and growing community of hobbyists that is developing and operating small unmanned aircraft systems (UAS), provide fertile ground for innovation and entrepreneurship (see Box S.4). The burgeoning industrial sector devoted to the design, manufacture, and sales of IA systems is indicative of the perceived economic opportunities that will arise. In short, civil aviation is on the threshold of potentially revolutionary changes in aviation capabilities and operations associated with IA systems. These systems, however, pose serious unanswered questions about how to safely integrate these revolutionary technological advances into a well-established, safe, and efficiently functioning NAS governed by operating rules that can only be changed after extensive deliberation and consensus. In addition, the potential benefits that could accrue from the introduction of advanced IA systems in civil aviation, the associated costs, and the unintended consequences that are likely to arise will not fall on all stakeholders equally. This report suggests major elements of a national research agenda for autonomy in civil aviation that would inform and support the orderly implementation of IA systems in U.S. civil aviation. The scope of this study does not include organizational recommendations.

BARRIERS TO INCREASED AUTONOMY IN CIVIL AVIATION

The Committee on Autonomy Research for Civil Aviation has identified many substantial barriers to the increased use of autonomy in civil aviation systems and aircraft. These barriers cover a wide range of issues related to understanding, developing, and deploying IA ground and aircraft systems. Some of these issues are technical, some are related to certification and regulation, and some are related to legal and social concerns.

[LISTED]

The committee did not individually prioritize these barriers. However, there is one critical, crosscutting challenge that must be overcome to unleash the full potential of advanced IA systems in civil aviation. This challenge may be described in terms of the question “How can we assure that advanced IA systems—especially those systems that rely on adaptive/nondeterministic software—will enhance rather than diminish the safety and reliability of the NAS?” There are four particularly challenging barriers that stand in the way of meeting this key challenge:

. Certification process

. Decision making by adaptive/nondeterministic systems

. Trust in adaptive/nondeterministic IA systems

. Verification and validation

ELEMENTS OF A NATIONAL RESEARCH AGENDA FOR AUTONOMY IN CIVIL AVIATION

The committee identified eight high-level research projects that would address the barriers discussed above. The committee also identified several specific areas of research that could be included in each research project.

[LISTED]

FOUR MOST URGENT AND MOST DIFFICULT RESEARCH PROJECTS

Behavior of Adaptive/Nondeterministic Systems. Develop methodologies to characterize and bound the behavior of adaptive/nondeterministic systems over their complete life cycle.

Adaptive/nondeterministic properties will be integral to many advanced IA systems, but they will create challenges for assessing and setting the limits of their resulting behaviors. Advanced IA systems for civil aviation operate in an uncertain environment where physical disturbances, such as wind gusts, are often modeled using probabilistic models. These IA systems may rely on distributed sensor systems that have noise with stochastic properties such as uncertain biases and random drifts over time and varying environmental conditions. To improve performance, adaptive/nondeterministic IA systems will take advantage of evolving conditions and past experience to adapt their behavior; that is, they will be capable of learning. As these IA systems take over more functions traditionally performed by humans, there will be a growing need to incorporate autonomous monitoring and other safeguards to ensure continued appropriate operational behavior.

There is tension between the benefits of incorporating software with adaptive/nondeterministic properties in IA systems and the requirement to test such software for safe and assured operation. Research is needed to develop new methods and tools to address the inherent uncertainties in airspace system operations and thereby enable more complex adaptive/nondeterministic IA systems with the ability to adapt over time to improve their performance and provide greater assurance of safety.
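EDITOR: One common way to think about "bounding" the behavior of adaptive software is a run-time monitor that accepts the adaptive output only while it stays inside a pre-approved envelope and otherwise switches to a simpler fallback. The sketch below is an illustration of that general idea only; the report does not prescribe this design, and the function name, the limits, and the fallback value are hypothetical.

```python
def bounded_command(adaptive_cmd, fallback_cmd, lower, upper):
    """Return the adaptive command if it lies within [lower, upper].

    Otherwise return the fallback command. The second element of the
    result records which source was used, so the switch can be logged.
    A NaN adaptive command fails the comparison and triggers the fallback.
    """
    if lower <= adaptive_cmd <= upper:
        return adaptive_cmd, "adaptive"
    return fallback_cmd, "fallback"


# Example: a hypothetical pitch command limited to +/- 10 degrees.
command, source = bounded_command(25.0, 0.0, -10.0, 10.0)
```

The design choice here is that only the small monitor and the fallback need to be verified exhaustively; the adaptive component can change over time without being able to push the output outside the envelope.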

Specific tasks to be carried out by this research project include the following:

[LISTED]

Operation Without Continuous Human Oversight. Develop the system architectures and technologies that would enable increasingly sophisticated IA systems and unmanned aircraft to operate for extended periods of time without real-time human cognizance and control.

Crewed aircraft have systems with varying levels of automation that can operate without continuous human oversight unless that system fails or malfunctions. Even so, pilots are expected to maintain continuous cognizance and control over the aircraft as a whole. Advanced IA systems could allow unmanned aircraft to operate for extended periods of time without the need for human operators to monitor, supervise, and/or directly intervene in the operation of those systems in real time. This requires that certain critical system functions currently provided by humans, such as “detect and avoid,” performance monitoring, subsystem anomaly and failure detection, and contingency decision making, be accomplished by the IA systems during periods when the system operates unattended. Eliminating the need for continuous cognizance and control of unmanned aircraft operations would enable unmanned aircraft to take on new roles that are not practical or cost-effective with continuous oversight. This capability also can improve safety for crewed operations in situations where risk to a human operator is unacceptably high, workload is too heavy, or the task too monotonous to expect continuous operator vigilance. Successful development of an unattended operational capability depends on understanding how humans perform their roles in the present system and how these roles are translated to the IA system, particularly for high-risk situations. Eliminating the need for continuous human cognizance and control requires a system architecture that also supports intermittent human cognizance and control.

[SPECIFIC TASKS LISTED]

Modeling and Simulation. Develop the theoretical basis and methodologies for using modeling and simulation to accelerate the development and maturation of advanced IA systems and aircraft.

Modeling and simulation capabilities will play an important role in the development, implementation, and evolution of IA systems in civil aviation because they enable researchers, designers, regulators, and operators to get information about how something performs without actually testing it in real life. Modeling and simulation enable insights into component and system performance without necessarily engendering the expense and risk associated with actual operations. For example, computer simulations may be able to test the performance of some IA systems in literally millions of scenarios in a short time to produce a statistical basis for determining safety risks and establishing the confidence of IA system performance. Researchers and designers are also likely to make use of modeling and simulation capabilities to evaluate design alternatives. Developers of IA systems will be able to train adaptive (i.e., learning) algorithms through repeated operations in simulation. Modeling and simulation capabilities could also be used to train human operators. The committee envisions the creation of a distributed suite of modeling and simulation modules developed by disparate organizations with the ability to be interconnected or networked, as appropriate, based on established standards. The committee believes that monolithic modeling and simulation efforts that are intended to develop capabilities that can “do it all” and answer any and all questions tend not to be effective due to limitations in access and availability; the higher cost of creating, employing, and maintaining them; the complexity of their application, which constrains their use; and the centralization of development risks. Given the importance of modeling and simulation capabilities to the creation, evaluation, and evolution of IA systems, mechanisms will be needed to ensure that these capabilities perform as intended. A process for accrediting models and simulations will also be required.
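EDITOR: The idea of running many simulated scenarios to build a statistical basis for safety risk can be sketched as a simple Monte Carlo estimate. The toy scenario, its gust and margin values, and the function names below are hypothetical and do not come from the report; the upper bound uses Hoeffding's inequality, a standard result that holds with probability at least 1 - delta for independent trials.

```python
import math
import random


def simulate_scenario(rng):
    """Hypothetical toy scenario: failure if a random gust exceeds a margin."""
    gust = rng.gauss(0.0, 5.0)   # illustrative gust model
    margin = 12.0                # illustrative safety margin
    return abs(gust) > margin    # True means the scenario failed


def estimate_failure_rate(n, seed=0, delta=0.05):
    """Run n independent scenarios and return (observed rate, upper bound)."""
    rng = random.Random(seed)
    failures = sum(simulate_scenario(rng) for _ in range(n))
    p_hat = failures / n
    # Hoeffding bound: true rate <= p_hat + sqrt(ln(1/delta) / (2n))
    # with probability at least 1 - delta.
    upper = min(1.0, p_hat + math.sqrt(math.log(1.0 / delta) / (2.0 * n)))
    return p_hat, upper


p_hat, upper = estimate_failure_rate(50_000)
```

The bound tightens only with the square root of the number of runs, which is one reason very rare failure modes demand very large numbers of scenarios, and why the accuracy of the underlying model (the accreditation question raised above) matters as much as the run count.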

[SPECIFIC TASKS LISTED]

Verification, Validation, and Certification. Develop standards and processes for the verification, validation, and certification of IA systems and determine their implications for design.

The high levels of aviation system safety achieved in the operation of the NAS largely reflect the formal requirements imposed by the FAA for verification, validation, and certification (VV&C) of hardware and software and the certification of personnel as a condition for entry into the system. These processes have evolved over many decades and represent the cumulative experience of all elements of civil aviation—manufacturers, regulators, pilots, controllers, other operators—in the operation of that system. Although viewed by some as unnecessarily cumbersome and expensive, VV&C processes are critical to the continued safe operation of the NAS. However, extension of these concepts and principles to advanced IA systems is not a simple matter and will require the development of new approaches and tools. Furthermore, the broad range of aircraft sizes, masses, and capabilities envisioned in future civil aviation operations may present opportunities to reassess the current safety and reliability criteria for various components of the aviation system. As was done in the past during the introduction of major new technologies, such as fly-by-wire and composite materials, the FAA will need to develop technical competency in IA systems and issue guidance material and new regulations to enable safe operation of all classes and types of IA systems.

[SPECIFIC TASKS LISTED]

ADDITIONAL HIGH-PRIORITY RESEARCH PROJECTS

Nontraditional Methodologies and Technologies. Develop methodologies for accepting technologies not traditionally used in civil aviation (e.g., open-source software and consumer electronic products) in IA systems.

Open-source hardware and software are being widely used in the rapidly evolving universe of IA systems. This is particularly, but not uniquely, true in the active and growing community of hobbyists and prospective entrepreneurs who are developing and operating small unmanned aircraft. Separately, the automotive industry is deploying IA systems using verification and validation (V&V) methods different from the methods traditionally used in aviation. The committee believes that there are many potential safety and economic benefits that might be realized in the civil aviation environment by developing suitable methodology that would permit reliable, safe adoption of hardware and software systems of unknown provenance. Although these issues are closely related to issues of V&V and certification, the committee believes they merit independent research attention. This might open up new opportunities for the beneficial deployment of technologies that fall outside traditional uses and applications.

[SPECIFIC TASKS LISTED]

Roles of Personnel and Systems. Determine how the roles of key personnel and systems, as well as related human–machine interfaces, should evolve to enable the operation of IA systems.

Effectively integrating humans and machines in the civil aviation system has been a high priority and sometimes an elusive design challenge for decades. Human–machine integration may become an even greater challenge with the advent of advanced IA systems. Although the reliance on high levels of automation in crewed aircraft has increased the overall levels of system safety, persistent and seemingly intractable issues arise in the context of incidents and accidents. Typically, pilots experience difficulty in developing and maintaining an appropriate mental model of what the automation is doing at any given time. Maintaining an awareness of the operational mode of key automated systems can become especially problematic in dynamic situations. Advanced IA systems will change the specifics of the human performance required by such systems, but it remains to be seen if cognitive requirements for human operators will be more or less stringent. Not only are there significant issues surrounding the proper roles and responsibilities of humans in such systems, but there are also important new questions about the properties and characteristics of the human–machine interface posed by the adaptive/nondeterministic behavior of these systems. The committee believes that in many ways, these are a logical extension of the age-old questions about duties, responsibilities, and skills and training required for pilots, air traffic controllers, and other humans in the system. However, advanced IA systems may permit, or even require, new roles and radical realignment of the more traditional roles of such human actors to achieve some of the benefits envisioned. The importance of these issues cannot be overstated, and, again, realization of projected benefits of autonomy will be constrained by failure to address the issues through research.

[SPECIFIC TASKS LISTED]

Safety and Efficiency. Determine how IA systems could enhance the safety and efficiency of the civil aviation system.

As with other new technologies, poorly implemented IA systems could put at risk the high levels of efficiency and safety that are the hallmarks of civil aviation, particularly for commercial air transportation. However, done properly, advances in IA systems could enhance both the safety and the efficiency of civil aviation. For example, IA systems have the potential to reduce reaction times in safety-critical situations, especially in circumstances that today are encumbered by the requirement for human-to-human interactions. The ability of IA capabilities to rapidly cue operators or potentially render a fully autonomous response in safety-critical situations could improve both safety and efficiency. IA systems could substantially reduce the frequency of those classes of accidents typically ascribed to operator error. This could be of particular value in the segments of civil aviation, such as general aviation and medical evacuations by helicopter, that have much higher accident rates than commercial air transports.

Whether located on board an aircraft or in ATM centers, IA systems also have the potential to reduce manpower requirements, thereby increasing the efficiency of operations. If realized, this benefit also brings the concomitant attribute of lower operating costs.

In instances where IA systems make it possible for small unmanned aircraft to replace crewed aircraft, the risks to persons and property on the ground in the event of an accident could be greatly reduced, owing to the reduced damage footprint in those instances, and the risk to air crew is eliminated entirely.

[SPECIFIC TASKS LISTED]

Stakeholder Trust. Develop processes to engender broad stakeholder trust in IA systems in the civil aviation system.

IA systems can fundamentally change the relationship between people and technology, and one important dimension of that relationship is trust. Although increasingly used as an engineering term in the context of software and security assurance, trust is above all a social term and becomes increasingly relevant to human–technology relationships when complexity thwarts the ability to fully understand a technology’s behavior. Trust is not a trait of the system; it is the system status in the mind of human beings based on their perception of and experience with the system. Trust concerns the attitude that a person or technology will help achieve specific goals in a situation characterized by uncertainty and vulnerability. It is the perception of trustworthiness that influences how people respond to a system.

Although closely related to V&V and certification, trust warrants attention as a distinct research topic because formal certification does not guarantee trust and eventual adoption. Stakeholder trust is also tied to cybersecurity and related issues; trustworthiness depends not only on designers’ intents but also on the degree to which the design prevents either inadvertent or intentional corruption of system data and processes.

[SPECIFIC TASKS LISTED]

COORDINATION OF RESEARCH AND DEVELOPMENT

All of the research projects described above can and should be addressed by multiple organizations in the federal government, industry, and academia.

The roles of academia and industry would be essentially the same for each research project because of the nature of the role they play in the development of new technologies and products.

The FAA would be most directly engaged in the VV&C research project, because certification of civil aviation systems is one of its core functions. However, the subject matters of most of the other research projects are also related to certification directly or indirectly, so the FAA would ultimately be interested in the progress and results of those other projects as well.

The Department of Defense (DOD) is primarily concerned with military applications of IA systems, though it must also ensure that military aircraft with IA systems that are based in the United States satisfy requirements for operating in the NAS. Its interests and research capabilities coincide with all eight research projects, especially with those on the roles of personnel and systems and operation without continuous human oversight.

The National Aeronautics and Space Administration (NASA) supports basic and applied research in civil aviation technologies, including ATM technologies of interest to the FAA. Its interests and research capabilities also encompass the scope of all eight research projects, particularly modeling and simulation, nontraditional methodologies and technologies, and safety and efficiency.

Each of the high-priority research projects overlaps to some extent with one or more of the others. Even looked at individually, each would be best addressed by multiple organizations working in concert. There is already some movement in that direction.

The FAA has created the Unmanned Aircraft Systems Integration Office to foster collaboration with a broad spectrum of stakeholders, including DOD, NASA, industry, academia, and technical standards organizations. In 2015, the FAA will establish an air transportation center of excellence for UAS research, engineering, and development…

CONCLUDING REMARKS

Civil aviation in the United States and elsewhere in the world is on the threshold of profound changes in the way it operates because of the rapid evolution of IA systems. Advanced IA systems will, among other things, be able to operate without direct human supervision or control for extended periods of time and over long distances. As happens with any other rapidly evolving technology, early adopters sometimes get caught up in the excitement of the moment, producing a form of intellectual hyperinflation that greatly exaggerates the promise of things to come and greatly underestimates costs in terms of money, time, and, in many cases, unintended consequences or complications. While there is little doubt that over the long run the potential benefits of IA in civil aviation will indeed be great, there should be equally little doubt that getting there, while maintaining or improving the safety and efficiency of the nation’s civil aviation system, will be no easy matter. Furthermore, given that the potential benefits of advanced IA systems, as well as the unintended consequences, will inevitably accrue to some stakeholders much more than others, the enthusiasm of the latter for fielding such systems could be limited. In any case, overcoming the barriers identified in this report by pursuing the research agenda proposed by the committee is a vital next step, although more work beyond the issues identified here will certainly be needed as the nation ventures into this new era of flight.

Findings and Recommendation

Finding. Potential Benefits and Risks. The intensity and extent of autonomy-related research, development, implementation, and operations in the civil aviation sector suggest that there are several potential benefits to increased autonomy for civil aviation. These benefits include but are not limited to improved safety and reliability, reduced acquisition and operational costs, and expanded operational capabilities. However, the extent to which these benefits are realized will be greatly dependent on the degree to which the barriers that have been identified are overcome, the extent to which military expertise and systems can be leveraged, and the extent to which government and nongovernment efforts are coordinated.

Finding. Barriers. There are many substantial barriers to the increased use of autonomy in civil aviation systems and aircraft:

Technology barriers: communications and data acquisition; cyberphysical security; diversity of aircraft; human–machine integration; decision making by adaptive/nondeterministic systems; sensing, perception, and cognition; system complexity and resilience; and verification and validation.

Regulation and certification barriers: airspace access for unmanned aircraft; certification process; equivalent level of safety; and trust in adaptive/nondeterministic IA systems.

Additional barriers: legal issues and social issues.

Statement of Task

The National Research Council will appoint an ad-hoc committee to develop a national research agenda for autonomy in civil aviation, comprised of a prioritized set of integrated and comprehensive technical goals and objectives of importance to the civil aeronautics community and the nation. The elements of the recommended research agenda for autonomy in civil aviation will be evolved from the existing state of the art, scientific and technological requirements to advance the state of the art, potential user needs, and technical research plans, programs, and activities. In addition, the committee will consider the resources and organizational partnerships required to complete various elements of the agenda.

Related story:

Sikorsky launches Autonomy Research program

Sikorsky research program aims at autonomy for any vertical-lift vehicle, manned or unmanned

Autonomy does not have to mean unmanned, and the potential to increase safety, reliability and capability across a range of missions and platforms—including manned and optionally piloted—is behind Sikorsky’s launch of a multi-year research program to develop autonomous technology for vertical flight in demanding environments.

The Matrix Technology program is modeled on Sikorsky’s X2 Technology demonstration, a $50 million internally funded effort that culminated in 2010 with a coaxial-rotor compound helicopter achieving 250 kt. in level flight, and led to industry-funded development of the S-97 Raider light tactical helicopter. As with the X2, the Matrix program has key performance parameters (KPP), milestones and deliverables—including the first flight last week of an S-76 demonstrator in autonomous mode. This is to be followed in the fourth quarter by an unmanned cargo mission with a UH-60MU fly-by-wire (FBW) Black Hawk.

For more on this story go to: http://aviationweek.com/awin/sikorsky-launches-autonomy-research-program

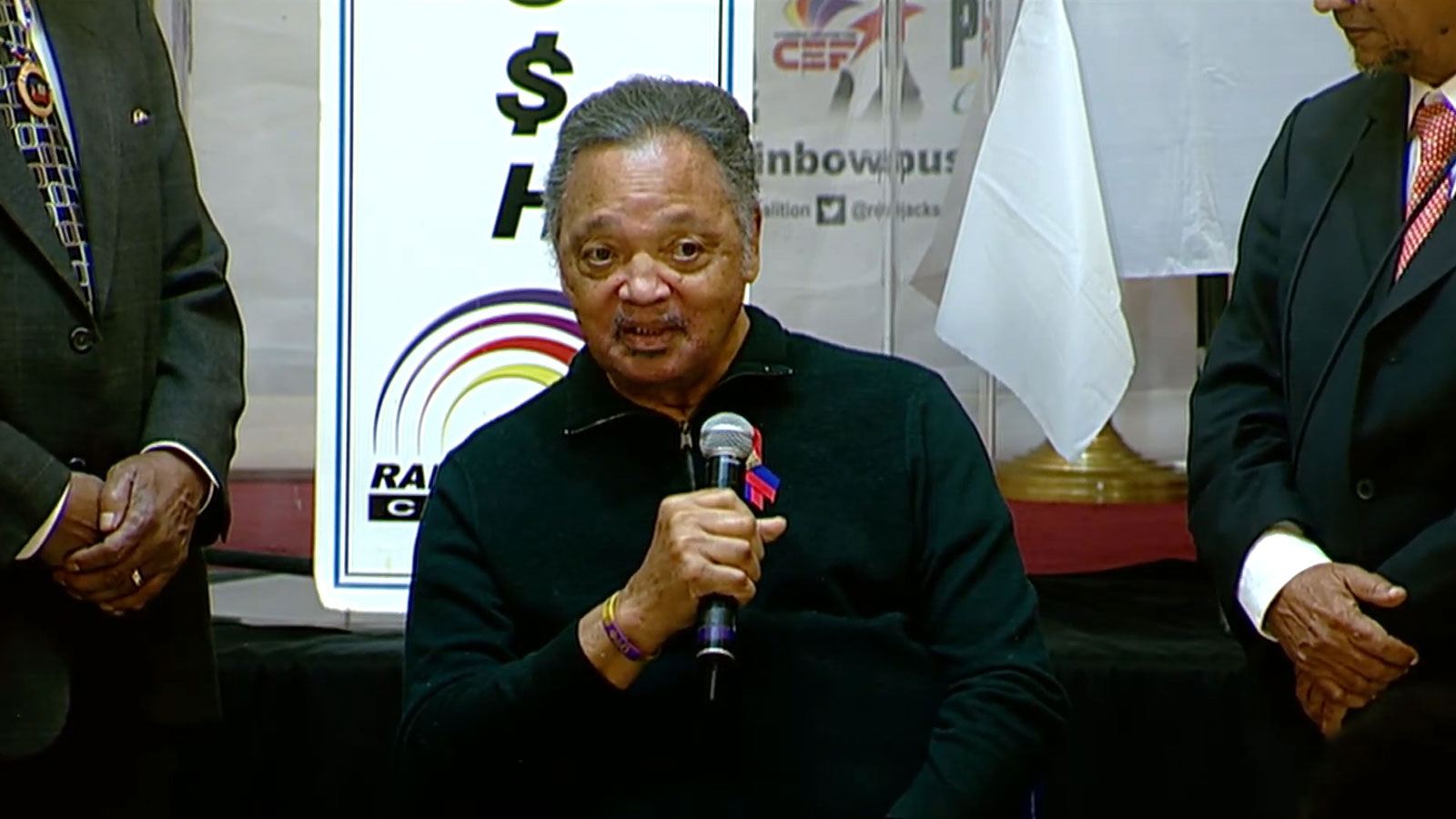

IMAGE: www.gtresearchnews.gatech.edu

John-Paul Clarke

From Georgia Tech College of Engineering

Associate Professor

John-Paul Clarke is an Associate Professor in the Daniel Guggenheim School of Aerospace Engineering with a courtesy appointment in the H. Milton Stewart School of Industrial and Systems Engineering, and Director of the Air Transportation Laboratory at the Georgia Institute of Technology. He received S.B. (1991), S.M. (1992), and Sc.D. (1997) degrees in aeronautics and astronautics from the Massachusetts Institute of Technology. His research and teaching in the areas of control, optimization, and system analysis, architecture, and design are motivated by his desire to simultaneously maximize the efficiency and minimize the societal costs (especially on the environment) of the global air transportation system.

Dr. Clarke has made seminal contributions in the areas of air traffic management, aircraft operations, and airline operations – the three key elements of the air transportation system – and has been recognized globally for developing, among other things, key analytical foundations for the Continuous Descent Arrival (CDA) and novel concepts for robust airline scheduling. His research has resulted in significant changes in engineering methods, processes and products – most notably the development of new arrival procedures for four major US airports and one European airport, and changes in airline scheduling practices. He is an Associate Fellow of AIAA and a member of AGIFORS, INFORMS, and Sigma Xi. His many honors include the AIAA/AAAE/ACC Jay Hollingsworth Speas Airport Award in 1999, the FAA Excellence in Aviation Award in 2003, the National Academy of Engineering Gilbreth Lectureship in 2006, and the 37th SAE/AIAA William Littlewood Memorial Lecture Award (to be awarded in January 2012).

Dr. Clarke is a resident of Grand Cayman, Cayman Islands, and attends St. George’s Anglican (Episcopal) Church, where he is a leading member of the choir.